The Cross Product

In mathematics, the cross product or vector product (occasionally directed area product, to emphasize its geometric significance) is a binary operation on two vectors in a three-dimensional oriented Euclidean vector space (named here E), and is denoted by the symbol ×. Given two linearly independent vectors a and b, the cross product, a × b (read "a cross b"), is a vector that is perpendicular to both a and b, and thus normal to the plane containing them. It has many applications in mathematics, physics, engineering, and computer programming. It should not be confused with the dot product (projection product).

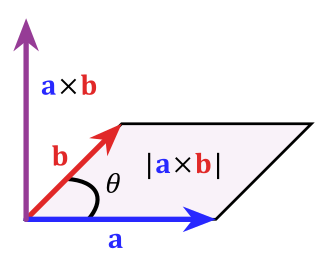

If two vectors have the same direction or have the exact opposite direction from each other (that is, they are not linearly independent), or if either one has zero length, then their cross product is zero. More generally, the magnitude of the product equals the area of a parallelogram with the vectors for sides; in particular, the magnitude of the product of two perpendicular vectors is the product of their lengths.

The cross product is anticommutative (that is, a × b = −b × a) and is distributive over addition (that is, a × (b + c) = a × b + a × c). The space E together with the cross product is an algebra over the real numbers, which is neither commutative nor associative, but is a Lie algebra with the cross product being the Lie bracket.

Like the dot product, it depends on the metric of Euclidean space, but unlike the dot product, it also depends on a choice of orientation (or "handedness") of the space (which is why an oriented space is needed). In connection with the cross product, the exterior product of vectors can be used in arbitrary dimensions (with a bivector or 2-form result) and is independent of the orientation of the space.

The product can be generalized in various ways, using the orientation and metric structure just as for the traditional 3-dimensional cross product: in n dimensions, one can take the product of n − 1 vectors to produce a vector perpendicular to all of them. But if the product is limited to non-trivial binary products with vector results, it exists only in three and seven dimensions.

Definition

The cross product of two vectors a and b is defined only in three-dimensional space and is denoted by a × b. In physics and applied mathematics, the wedge notation a ∧ b is often used (in conjunction with the name vector product), although in pure mathematics such notation is usually reserved for just the exterior product, an abstraction of the vector product to n dimensions.

The cross product a × b is defined as a vector c that is perpendicular (orthogonal) to both a and b, with a direction given by the right-hand rule and a magnitude equal to the area of the parallelogram that the vectors span.

The cross product is defined by the formula

$$\mathbf{a} \times \mathbf{b} = \|\mathbf{a}\| \, \|\mathbf{b}\| \sin(\theta) \, \mathbf{n}$$

where:

- θ is the angle between a and b in the plane containing them (hence, it is between 0° and 180°)

- ‖a‖ and ‖b‖ are the magnitudes of vectors a and b

- and n is a unit vector perpendicular to the plane containing a and b, in the direction given by the right-hand rule (illustrated).

If the vectors a and b are parallel (that is, the angle θ between them is either 0° or 180°), by the above formula, the cross product of a and b is the zero vector 0.

Direction

By convention, the direction of the vector n is given by the right-hand rule, where one simply points the forefinger of the right hand in the direction of a and the middle finger in the direction of b. Then, the vector n is coming out of the thumb (see the adjacent picture). Using this rule implies that the cross product is anti-commutative; that is, b × a = −(a × b). By pointing the forefinger toward b first, and then pointing the middle finger toward a, the thumb will be forced in the opposite direction, reversing the sign of the product vector.

As the cross product operator depends on the orientation of the space (as explicit in the definition above), the cross product of two vectors is not a "true" vector, but a pseudovector.

Names

In 1881, Josiah Willard Gibbs, and independently Oliver Heaviside, introduced both the dot product and the cross product using a period (a . b) and an "x" (a x b), respectively, to denote them.

In 1877, to emphasize the fact that the result of a dot product is a scalar while the result of a cross product is a vector, William Kingdon Clifford coined the alternative names scalar product and vector product for the two operations. These alternative names are still widely used in the literature.

Both the cross notation (a × b) and the name cross product were possibly inspired by the fact that each scalar component of a × b is computed by multiplying non-corresponding components of a and b. Conversely, a dot product a ⋅ b involves multiplications between corresponding components of a and b. As explained below, the cross product can be expressed in the form of a determinant of a special 3 × 3 matrix. According to Sarrus's rule, this involves multiplications between matrix elements identified by crossed diagonals.

Computing

Coordinate notation

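If (i, j, k) is a positively oriented orthonormal basis, the basis vectors satisfy the following equalities

$$\begin{aligned}
\mathbf{i} \times \mathbf{j} &= \mathbf{k} \\
\mathbf{j} \times \mathbf{k} &= \mathbf{i} \\
\mathbf{k} \times \mathbf{i} &= \mathbf{j}
\end{aligned}$$

which imply, by the anticommutativity of the cross product, that

$$\begin{aligned}
\mathbf{j} \times \mathbf{i} &= -\mathbf{k} \\
\mathbf{k} \times \mathbf{j} &= -\mathbf{i} \\
\mathbf{i} \times \mathbf{k} &= -\mathbf{j}
\end{aligned}$$

The anticommutativity of the cross product (and the obvious lack of linear independence) also implies that

- $\mathbf{i} \times \mathbf{i} = \mathbf{j} \times \mathbf{j} = \mathbf{k} \times \mathbf{k} = \mathbf{0}$ (the zero vector).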

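These equalities, together with the distributivity and linearity of the cross product (though neither follows easily from the definition given above), are sufficient to determine the cross product of any two vectors a and b. Each vector can be defined as the sum of three orthogonal components parallel to the standard basis vectors:

$$\begin{aligned}
\mathbf{a} &= a_1 \mathbf{i} + a_2 \mathbf{j} + a_3 \mathbf{k} \\
\mathbf{b} &= b_1 \mathbf{i} + b_2 \mathbf{j} + b_3 \mathbf{k}
\end{aligned}$$

Their cross product a × b can be expanded using distributivity:

$$\begin{aligned}
\mathbf{a} \times \mathbf{b} = {} & (a_1 \mathbf{i} + a_2 \mathbf{j} + a_3 \mathbf{k}) \times (b_1 \mathbf{i} + b_2 \mathbf{j} + b_3 \mathbf{k}) \\
= {} & a_1 b_1 (\mathbf{i} \times \mathbf{i}) + a_1 b_2 (\mathbf{i} \times \mathbf{j}) + a_1 b_3 (\mathbf{i} \times \mathbf{k}) \\
& + a_2 b_1 (\mathbf{j} \times \mathbf{i}) + a_2 b_2 (\mathbf{j} \times \mathbf{j}) + a_2 b_3 (\mathbf{j} \times \mathbf{k}) \\
& + a_3 b_1 (\mathbf{k} \times \mathbf{i}) + a_3 b_2 (\mathbf{k} \times \mathbf{j}) + a_3 b_3 (\mathbf{k} \times \mathbf{k})
\end{aligned}$$

This can be interpreted as the decomposition of a × b into the sum of nine simpler cross products involving vectors aligned with i, j, or k. Each one of these nine cross products operates on two vectors that are easy to handle as they are either parallel or orthogonal to each other. From this decomposition, by using the above-mentioned equalities and collecting similar terms, we obtain:

$$\begin{aligned}
\mathbf{a} \times \mathbf{b} = {} & a_1 b_1 \mathbf{0} + a_1 b_2 \mathbf{k} - a_1 b_3 \mathbf{j} - a_2 b_1 \mathbf{k} + a_2 b_2 \mathbf{0} + a_2 b_3 \mathbf{i} + a_3 b_1 \mathbf{j} - a_3 b_2 \mathbf{i} + a_3 b_3 \mathbf{0} \\
= {} & (a_2 b_3 - a_3 b_2) \mathbf{i} + (a_3 b_1 - a_1 b_3) \mathbf{j} + (a_1 b_2 - a_2 b_1) \mathbf{k}
\end{aligned}$$

meaning that the three scalar components of the resulting vector s = s1i + s2j + s3k = a × b are

$$\begin{aligned}
s_1 &= a_2 b_3 - a_3 b_2 \\
s_2 &= a_3 b_1 - a_1 b_3 \\
s_3 &= a_1 b_2 - a_2 b_1
\end{aligned}$$

Using column vectors, we can represent the same result as follows:

$$\begin{bmatrix} s_1 \\ s_2 \\ s_3 \end{bmatrix} = \begin{bmatrix} a_2 b_3 - a_3 b_2 \\ a_3 b_1 - a_1 b_3 \\ a_1 b_2 - a_2 b_1 \end{bmatrix}$$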
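The component formula translates directly into code. The following is a minimal sketch in plain Python; the function name `cross` and the use of tuples for vectors are choices made here for illustration, not something the article prescribes.

```python
def cross(a, b):
    """Cross product of two 3-vectors given as (x, y, z) sequences."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - a3 * b2,
            a3 * b1 - a1 * b3,
            a1 * b2 - a2 * b1)

# Standard basis vectors of a positively oriented orthonormal basis.
i, j, k = (1, 0, 0), (0, 1, 0), (0, 0, 1)

print(cross(i, j))                  # (0, 0, 1), that is k
print(cross(j, i))                  # (0, 0, -1), that is -k
print(cross((2, 3, 4), (5, 6, 7)))  # (-3, 6, -3)
```

Swapping the arguments flips the sign of every component, which is the anticommutativity used in the derivation.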

Matrix notation

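The cross product can also be expressed as the formal determinant:

$$\mathbf{a} \times \mathbf{b} = \begin{vmatrix} \mathbf{i} & \mathbf{j} & \mathbf{k} \\ a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \end{vmatrix}$$

This determinant can be computed using Sarrus's rule or cofactor expansion. Using Sarrus's rule, it expands to

$$\mathbf{a} \times \mathbf{b} = (a_2 b_3 \mathbf{i} + a_3 b_1 \mathbf{j} + a_1 b_2 \mathbf{k}) - (a_3 b_2 \mathbf{i} + a_1 b_3 \mathbf{j} + a_2 b_1 \mathbf{k})$$

Using cofactor expansion along the first row instead, it expands to

$$\begin{aligned}
\mathbf{a} \times \mathbf{b} &= \begin{vmatrix} a_2 & a_3 \\ b_2 & b_3 \end{vmatrix} \mathbf{i} - \begin{vmatrix} a_1 & a_3 \\ b_1 & b_3 \end{vmatrix} \mathbf{j} + \begin{vmatrix} a_1 & a_2 \\ b_1 & b_2 \end{vmatrix} \mathbf{k} \\
&= (a_2 b_3 - a_3 b_2) \mathbf{i} - (a_1 b_3 - a_3 b_1) \mathbf{j} + (a_1 b_2 - a_2 b_1) \mathbf{k}
\end{aligned}$$

which gives the components of the resulting vector directly.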

Using Levi-Civita tensors

- In any basis, the cross product a × b is given by the tensorial formula $E_{ijk} a^i b^j$, where $E_{ijk}$ is the covariant Levi-Civita tensor (we note the position of the indices). That corresponds to the intrinsic formula given here.

- In an orthonormal basis having the same orientation as the space, a × b is given by the pseudo-tensorial formula $\varepsilon_{ijk} a^i b^j$, where $\varepsilon_{ijk}$ is the Levi-Civita symbol (which is a pseudo-tensor). That is the formula used for everyday physics, but it works only for this special choice of basis.

- In any orthonormal basis, a × b is given by the pseudo-tensorial formula $(-1)^B \varepsilon_{ijk} a^i b^j$, where $(-1)^B = \pm 1$ indicates whether the basis has the same orientation as the space or not.

The latter formula avoids having to change the orientation of the space when we invert an orthonormal basis.

Properties

Geometric meaning

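The magnitude of the cross product can be interpreted as the positive area of the parallelogram having a and b as sides (see Figure 1):

$$\|\mathbf{a} \times \mathbf{b}\| = \|\mathbf{a}\| \, \|\mathbf{b}\| \, |\sin \theta|$$

Indeed, one can also compute the volume V of a parallelepiped having a, b and c as edges by using a combination of a cross product and a dot product, called scalar triple product (see Figure 2):

$$\mathbf{a} \cdot (\mathbf{b} \times \mathbf{c}) = \mathbf{b} \cdot (\mathbf{c} \times \mathbf{a}) = \mathbf{c} \cdot (\mathbf{a} \times \mathbf{b})$$

Since the result of the scalar triple product may be negative, the volume of the parallelepiped is given by its absolute value:

$$V = |\mathbf{a} \cdot (\mathbf{b} \times \mathbf{c})|$$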
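As a small illustration of the area and volume interpretations, the sketch below computes both for concrete vectors. The helper names (`cross`, `dot`, `parallelogram_area`, `parallelepiped_volume`) are hypothetical and chosen only for this example.

```python
import math

def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two vectors of equal length."""
    return sum(x * y for x, y in zip(a, b))

def parallelogram_area(a, b):
    """Area of the parallelogram with sides a and b: the norm of a x b."""
    n = cross(a, b)
    return math.sqrt(dot(n, n))

def parallelepiped_volume(a, b, c):
    """Volume of the parallelepiped with edges a, b, c: |a . (b x c)|."""
    return abs(dot(a, cross(b, c)))

a, b, c = (3, 0, 0), (0, 4, 0), (1, 1, 5)
print(parallelogram_area(a, b))        # 12.0
print(parallelepiped_volume(a, b, c))  # 60
```

The third edge c is deliberately slanted: only its component along a × b (here 5) contributes to the volume.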

Because the magnitude of the cross product goes by the sine of the angle between its arguments, the cross product can be thought of as a measure of perpendicularity in the same way that the dot product is a measure of parallelism. Given two unit vectors, their cross product has a magnitude of 1 if the two are perpendicular and a magnitude of zero if the two are parallel. The dot product of two unit vectors behaves just oppositely: it is zero when the unit vectors are perpendicular and 1 if the unit vectors are parallel.

Unit vectors enable two convenient identities: the dot product of two unit vectors yields the cosine (which may be positive or negative) of the angle between the two unit vectors. The magnitude of the cross product of the two unit vectors yields the sine (which is always non-negative, since the angle lies between 0° and 180°).

Algebraic properties

If the cross product of two vectors is the zero vector (that is, a × b = 0), then either one or both of the inputs is the zero vector, (a = 0 or b = 0) or else they are parallel or antiparallel (a ∥ b) so that the sine of the angle between them is zero (θ = 0° or θ = 180° and sin θ = 0).

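The self cross product of a vector is the zero vector:

$$\mathbf{a} \times \mathbf{a} = \mathbf{0}.$$

The cross product is anticommutative,

$$\mathbf{a} \times \mathbf{b} = -(\mathbf{b} \times \mathbf{a}),$$

distributive over addition,

$$\mathbf{a} \times (\mathbf{b} + \mathbf{c}) = (\mathbf{a} \times \mathbf{b}) + (\mathbf{a} \times \mathbf{c}),$$

and compatible with scalar multiplication so that

$$(r\mathbf{a}) \times \mathbf{b} = \mathbf{a} \times (r\mathbf{b}) = r(\mathbf{a} \times \mathbf{b}).$$

It is not associative, but satisfies the Jacobi identity:

$$\mathbf{a} \times (\mathbf{b} \times \mathbf{c}) + \mathbf{b} \times (\mathbf{c} \times \mathbf{a}) + \mathbf{c} \times (\mathbf{a} \times \mathbf{b}) = \mathbf{0}.$$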
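These algebraic properties can be spot-checked on concrete integer vectors. The sketch below is only a numerical sanity check for one arbitrary choice of a, b and c, not a proof; the helper names are illustrative.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def add(a, b):
    """Component-wise sum of two vectors."""
    return tuple(x + y for x, y in zip(a, b))

def scale(r, a):
    """Scalar multiple r * a."""
    return tuple(r * x for x in a)

a, b, c = (1, 2, 3), (-4, 0, 5), (2, -1, 7)
zero = (0, 0, 0)

assert cross(a, a) == zero                                   # self cross product
assert cross(a, b) == scale(-1, cross(b, a))                 # anticommutativity
assert cross(a, add(b, c)) == add(cross(a, b), cross(a, c))  # distributivity
assert cross(scale(3, a), b) == scale(3, cross(a, b))        # scalar compatibility

# Jacobi identity: the three cyclic double products sum to zero.
jacobi = add(add(cross(a, cross(b, c)), cross(b, cross(c, a))),
             cross(c, cross(a, b)))
assert jacobi == zero

# Non-associativity: the two bracketings differ for these vectors.
print(cross(cross(a, b), c))  # (-111, -54, 24)
print(cross(a, cross(b, c)))  # (-106, 11, 28)
```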

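Distributivity, linearity and Jacobi identity show that the R3 vector space together with vector addition and the cross product forms a Lie algebra, the Lie algebra of the real orthogonal group in 3 dimensions, SO(3). The cross product does not obey the cancellation law; that is, a × b = a × c with a ≠ 0 does not imply b = c, but only that:

$$\begin{aligned}
\mathbf{0} &= (\mathbf{a} \times \mathbf{b}) - (\mathbf{a} \times \mathbf{c}) \\
&= \mathbf{a} \times (\mathbf{b} - \mathbf{c}).
\end{aligned}$$

This can be the case where b and c cancel, but additionally where a and b − c are parallel; that is, they are related by a scale factor t, leading to:

$$\mathbf{c} = \mathbf{b} + t\,\mathbf{a},$$

for some scalar t.

If, in addition to a × b = a × c and a ≠ 0 as above, it is the case that a ⋅ b = a ⋅ c then

$$\begin{aligned}
\mathbf{a} \times (\mathbf{b} - \mathbf{c}) &= \mathbf{0} \\
\mathbf{a} \cdot (\mathbf{b} - \mathbf{c}) &= 0.
\end{aligned}$$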

As b − c cannot be simultaneously parallel (for the cross product to be 0) and perpendicular (for the dot product to be 0) to a, it must be the case that b and c cancel: b = c.
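A concrete counterexample makes the failure of cancellation visible. In the sketch below the vectors and the scale factor t = 2 are arbitrary choices made for illustration.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two vectors of equal length."""
    return sum(x * y for x, y in zip(a, b))

a = (1, 0, 0)
b = (0, 1, 0)
t = 2
c = tuple(bi + t * ai for ai, bi in zip(a, b))  # c = b + t a = (2, 1, 0)

print(cross(a, b), cross(a, c))  # (0, 0, 1) (0, 0, 1): equal although b != c
print(dot(a, b), dot(a, c))      # 0 2: the dot products tell b and c apart
```

Because the dot products differ, the extra condition a ⋅ b = a ⋅ c is exactly what rules this counterexample out.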

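From the geometrical definition, the cross product is invariant under proper rotations about the axis defined by a × b. In formulae:

- $(R\mathbf{a}) \times (R\mathbf{b}) = R(\mathbf{a} \times \mathbf{b})$, where R is a rotation matrix with det(R) = 1.

More generally, the cross product obeys the following identity under matrix transformations:

$$(M\mathbf{a}) \times (M\mathbf{b}) = (\det M) \left(M^{-1}\right)^{\mathrm{T}} (\mathbf{a} \times \mathbf{b}) = \operatorname{cof} M \, (\mathbf{a} \times \mathbf{b})$$

where M is a 3-by-3 matrix, $\left(M^{-1}\right)^{\mathrm{T}}$ is the transpose of the inverse and cof M is the cofactor matrix. It can be readily seen how this formula reduces to the former one if M is a rotation matrix.

The cross product of two vectors lies in the null space of the 2 × 3 matrix with the vectors as rows:

$$\mathbf{a} \times \mathbf{b} \in NS\left(\begin{bmatrix} \mathbf{a} \\ \mathbf{b} \end{bmatrix}\right).$$

For the sum of two cross products, the following identity holds:

$$\mathbf{a} \times \mathbf{b} + \mathbf{c} \times \mathbf{d} = (\mathbf{a} - \mathbf{c}) \times (\mathbf{b} - \mathbf{d}) + \mathbf{a} \times \mathbf{d} + \mathbf{c} \times \mathbf{b}.$$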

Differentiation

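The product rule of differential calculus applies to any bilinear operation, and therefore also to the cross product:

$$\frac{d}{dt}(\mathbf{a} \times \mathbf{b}) = \frac{d\mathbf{a}}{dt} \times \mathbf{b} + \mathbf{a} \times \frac{d\mathbf{b}}{dt},$$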

where a and b are vectors that depend on the real variable t.

Triple product expansion

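The cross product is used in both forms of the triple product. The scalar triple product of three vectors is defined as

$$\mathbf{a} \cdot (\mathbf{b} \times \mathbf{c}).$$

It is the signed volume of the parallelepiped with edges a, b and c and as such the vectors can be used in any order that's an even permutation of the above ordering. The following therefore are equal:

$$\mathbf{a} \cdot (\mathbf{b} \times \mathbf{c}) = \mathbf{b} \cdot (\mathbf{c} \times \mathbf{a}) = \mathbf{c} \cdot (\mathbf{a} \times \mathbf{b}).$$

The vector triple product is the cross product of a vector with the result of another cross product, and is related to the dot product by the following formula

$$\mathbf{a} \times (\mathbf{b} \times \mathbf{c}) = \mathbf{b}(\mathbf{a} \cdot \mathbf{c}) - \mathbf{c}(\mathbf{a} \cdot \mathbf{b}).$$

The mnemonic "BAC minus CAB" is used to remember the order of the vectors in the right hand member. This formula is used in physics to simplify vector calculations. A special case, regarding gradients and useful in vector calculus, is

$$\begin{aligned}
\nabla \times (\nabla \times \mathbf{f}) &= \nabla(\nabla \cdot \mathbf{f}) - (\nabla \cdot \nabla)\mathbf{f} \\
&= \nabla(\nabla \cdot \mathbf{f}) - \nabla^2 \mathbf{f},
\end{aligned}$$

where ∇² is the vector Laplacian operator.

Other identities relate the cross product to the scalar triple product:

$$\begin{aligned}
(\mathbf{a} \times \mathbf{b}) \times (\mathbf{a} \times \mathbf{c}) &= (\mathbf{a} \cdot (\mathbf{b} \times \mathbf{c}))\,\mathbf{a} \\
(\mathbf{a} \times \mathbf{b}) \cdot (\mathbf{c} \times \mathbf{d}) &= \mathbf{b}^{\mathrm{T}} \left( (\mathbf{c}^{\mathrm{T}} \mathbf{a}) I - \mathbf{c}\,\mathbf{a}^{\mathrm{T}} \right) \mathbf{d} \\
&= (\mathbf{a} \cdot \mathbf{c})(\mathbf{b} \cdot \mathbf{d}) - (\mathbf{a} \cdot \mathbf{d})(\mathbf{b} \cdot \mathbf{c})
\end{aligned}$$

where I is the identity matrix.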
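The triple product identities in this section can be checked numerically. The following sketch evaluates both sides of the "BAC minus CAB" expansion and the cyclic invariance of the scalar triple product for one arbitrary set of integer vectors; the helper names are hypothetical.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two vectors of equal length."""
    return sum(x * y for x, y in zip(a, b))

def scale(r, a):
    """Scalar multiple r * a."""
    return tuple(r * x for x in a)

def sub(a, b):
    """Component-wise difference a - b."""
    return tuple(x - y for x, y in zip(a, b))

a, b, c = (1, 2, 3), (-4, 0, 5), (2, -1, 7)

# Vector triple product: a x (b x c) = b (a . c) - c (a . b)
lhs = cross(a, cross(b, c))
rhs = sub(scale(dot(a, c), b), scale(dot(a, b), c))
print(lhs, rhs)  # (-106, 11, 28) (-106, 11, 28)

# Scalar triple product is unchanged under cyclic permutations.
triple = dot(a, cross(b, c))
print(triple, dot(b, cross(c, a)), dot(c, cross(a, b)))  # 93 93 93

# (a x b) x (a x c) = (a . (b x c)) a
print(cross(cross(a, b), cross(a, c)))  # (93, 186, 279)
```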

Alternative formulation

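The cross product and the dot product are related by:

$$\|\mathbf{a} \times \mathbf{b}\|^2 = \|\mathbf{a}\|^2 \|\mathbf{b}\|^2 - (\mathbf{a} \cdot \mathbf{b})^2.$$

The right-hand side is the Gram determinant of a and b, the square of the area of the parallelogram defined by the vectors. This condition determines the magnitude of the cross product. Namely, since the dot product is defined, in terms of the angle θ between the two vectors, as:

$$\mathbf{a} \cdot \mathbf{b} = \|\mathbf{a}\| \, \|\mathbf{b}\| \cos \theta,$$

the above given relationship can be rewritten as follows:

$$\|\mathbf{a} \times \mathbf{b}\|^2 = \|\mathbf{a}\|^2 \|\mathbf{b}\|^2 \left(1 - \cos^2 \theta\right).$$

Invoking the Pythagorean trigonometric identity one obtains:

$$\|\mathbf{a} \times \mathbf{b}\| = \|\mathbf{a}\| \, \|\mathbf{b}\| \, |\sin \theta|,$$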

which is the magnitude of the cross product expressed in terms of θ, equal to the area of the parallelogram defined by a and b.

The combination of this requirement and the property that the cross product be orthogonal to its constituents a and b provides an alternative definition of the cross product.

Lagrange's identity

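The relation:

$$\|\mathbf{a} \times \mathbf{b}\|^2 \equiv \det \begin{bmatrix} \mathbf{a} \cdot \mathbf{a} & \mathbf{a} \cdot \mathbf{b} \\ \mathbf{a} \cdot \mathbf{b} & \mathbf{b} \cdot \mathbf{b} \end{bmatrix} \equiv \|\mathbf{a}\|^2 \|\mathbf{b}\|^2 - (\mathbf{a} \cdot \mathbf{b})^2$$

can be compared with another relation involving the right-hand side, namely Lagrange's identity expressed as:

$$\sum_{1 \le i < j \le n} \left(a_i b_j - a_j b_i\right)^2 \equiv \|\mathbf{a}\|^2 \|\mathbf{b}\|^2 - (\mathbf{a} \cdot \mathbf{b})^2,$$

where a and b may be n-dimensional vectors. This also shows that the Riemannian volume form for surfaces is exactly the surface element from vector calculus. In the case where n = 3, combining these two equations results in the expression for the magnitude of the cross product in terms of its components:

$$\begin{aligned}
\|\mathbf{a} \times \mathbf{b}\|^2 &\equiv \sum_{1 \le i < j \le 3} \left(a_i b_j - a_j b_i\right)^2 \\
&\equiv (a_1 b_2 - b_1 a_2)^2 + (a_2 b_3 - a_3 b_2)^2 + (a_3 b_1 - a_1 b_3)^2.
\end{aligned}$$

The same result is found directly using the components of the cross product found from:

$$\mathbf{a} \times \mathbf{b} \equiv \det \begin{bmatrix} \mathbf{i} & \mathbf{j} & \mathbf{k} \\ a_1 & a_2 & a_3 \\ b_1 & b_2 & b_3 \end{bmatrix}.$$

In R3, Lagrange's equation is a special case of the multiplicativity |vw| = |v||w| of the norm in the quaternion algebra.

It is a special case of another formula, also sometimes called Lagrange's identity, which is the three dimensional case of the Binet–Cauchy identity:

$$(\mathbf{a} \times \mathbf{b}) \cdot (\mathbf{c} \times \mathbf{d}) \equiv (\mathbf{a} \cdot \mathbf{c})(\mathbf{b} \cdot \mathbf{d}) - (\mathbf{a} \cdot \mathbf{d})(\mathbf{b} \cdot \mathbf{c}).$$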

If a = c and b = d this simplifies to the formula above.
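Both the Binet–Cauchy identity and its special case for the squared magnitude can be verified on sample data. The sketch below uses arbitrary integer vectors so that the comparison is exact; it is a numerical check, not a derivation.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two vectors of equal length."""
    return sum(x * y for x, y in zip(a, b))

a, b, c, d = (1, 2, 3), (-4, 0, 5), (2, -1, 7), (0, 3, -2)

# Binet-Cauchy: (a x b) . (c x d) = (a . c)(b . d) - (a . d)(b . c)
lhs = dot(cross(a, b), cross(c, d))
rhs = dot(a, c) * dot(b, d) - dot(a, d) * dot(b, c)
print(lhs, rhs)  # -210 -210

# Special case c = a, d = b: |a x b|^2 = |a|^2 |b|^2 - (a . b)^2
n = cross(a, b)
norm_sq = dot(n, n)
gram = dot(a, a) * dot(b, b) - dot(a, b) ** 2
print(norm_sq, gram)  # 453 453
```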

Infinitesimal generators of rotations

The cross product conveniently describes the infinitesimal generators of rotations in R3. Specifically, if n is a unit vector in R3 and R(φ, n) denotes a rotation about the axis through the origin specified by n, with angle φ (measured in radians, counterclockwise when viewed from the tip of n), then

$$\left.\frac{d}{d\varphi}\right|_{\varphi = 0} R(\varphi, \mathbf{n})\,\mathbf{x} = \mathbf{n} \times \mathbf{x}$$

for every vector x in R3. The cross product with n therefore describes the infinitesimal generator of the rotations about n. These infinitesimal generators form the Lie algebra so(3) of the rotation group SO(3), and we obtain the result that the Lie algebra R3 with cross product is isomorphic to the Lie algebra so(3).
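The derivative statement can be illustrated numerically. The sketch below assumes Rodrigues' rotation formula as the concrete form of R(φ, n), which this article does not itself introduce, and approximates the derivative at φ = 0 with a central finite difference; the step size `h` and the helper names are choices made here for illustration.

```python
import math

def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two vectors of equal length."""
    return sum(x * y for x, y in zip(a, b))

def rotate(x, n, phi):
    """Rotate x about the unit axis n by angle phi (Rodrigues' formula):
    x cos(phi) + (n x x) sin(phi) + n (n . x) (1 - cos(phi))."""
    c, s = math.cos(phi), math.sin(phi)
    nx = cross(n, x)
    nd = dot(n, x)
    return tuple(c * xi + s * nxi + (1 - c) * nd * ni
                 for xi, nxi, ni in zip(x, nx, n))

n = (0.0, 0.0, 1.0)  # rotation axis (unit vector)
x = (1.0, 2.0, 3.0)
h = 1e-6             # finite-difference step (assumed small enough)

plus, minus = rotate(x, n, h), rotate(x, n, -h)
derivative = tuple((p - m) / (2 * h) for p, m in zip(plus, minus))

print(derivative)   # approximately (-2.0, 1.0, 0.0)
print(cross(n, x))  # (-2.0, 1.0, 0.0)
```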

Alternative ways to compute

Conversion to matrix multiplication

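The vector cross product can also be expressed as the product of a skew-symmetric matrix and a vector:

$$\begin{aligned}
\mathbf{a} \times \mathbf{b} = [\mathbf{a}]_{\times} \mathbf{b} &= \begin{bmatrix} 0 & -a_3 & a_2 \\ a_3 & 0 & -a_1 \\ -a_2 & a_1 & 0 \end{bmatrix} \begin{bmatrix} b_1 \\ b_2 \\ b_3 \end{bmatrix} \\
\mathbf{a} \times \mathbf{b} = {[\mathbf{b}]_{\times}}^{\mathrm{T}} \mathbf{a} &= \begin{bmatrix} 0 & b_3 & -b_2 \\ -b_3 & 0 & b_1 \\ b_2 & -b_1 & 0 \end{bmatrix} \begin{bmatrix} a_1 \\ a_2 \\ a_3 \end{bmatrix},
\end{aligned}$$

where superscript T refers to the transpose operation, and [a]× is defined by:

$$[\mathbf{a}]_{\times} \stackrel{\mathrm{def}}{=} \begin{bmatrix} 0 & -a_3 & a_2 \\ a_3 & 0 & -a_1 \\ -a_2 & a_1 & 0 \end{bmatrix}.$$

The columns [a]×,i of the skew-symmetric matrix for a vector a can also be obtained by calculating the cross product with unit vectors. That is,

$$[\mathbf{a}]_{\times, i} = \mathbf{a} \times \hat{\mathbf{e}}_i, \quad i \in \{1, 2, 3\}$$

or

$$[\mathbf{a}]_{\times} = \sum_{i=1}^{3} \left(\mathbf{a} \times \hat{\mathbf{e}}_i\right) \otimes \hat{\mathbf{e}}_i,$$

where ⊗ is the outer product operator.

Also, if a is itself expressed as a cross product:

$$\mathbf{a} = \mathbf{c} \times \mathbf{d}$$

then

$$[\mathbf{a}]_{\times} = \mathbf{d}\mathbf{c}^{\mathrm{T}} - \mathbf{c}\mathbf{d}^{\mathrm{T}}.$$

Proof by substitution

Evaluation of the cross product gives

$$\mathbf{a} = \mathbf{c} \times \mathbf{d} = \begin{bmatrix} c_2 d_3 - c_3 d_2 \\ c_3 d_1 - c_1 d_3 \\ c_1 d_2 - c_2 d_1 \end{bmatrix}$$

Hence, the left hand side equals

$$[\mathbf{a}]_{\times} = \begin{bmatrix} 0 & c_2 d_1 - c_1 d_2 & c_3 d_1 - c_1 d_3 \\ c_1 d_2 - c_2 d_1 & 0 & c_3 d_2 - c_2 d_3 \\ c_1 d_3 - c_3 d_1 & c_2 d_3 - c_3 d_2 & 0 \end{bmatrix}$$

Now, for the right hand side,

$$\mathbf{c}\mathbf{d}^{\mathrm{T}} = \begin{bmatrix} c_1 d_1 & c_1 d_2 & c_1 d_3 \\ c_2 d_1 & c_2 d_2 & c_2 d_3 \\ c_3 d_1 & c_3 d_2 & c_3 d_3 \end{bmatrix}$$

And its transpose is

$$\mathbf{d}\mathbf{c}^{\mathrm{T}} = \begin{bmatrix} c_1 d_1 & c_2 d_1 & c_3 d_1 \\ c_1 d_2 & c_2 d_2 & c_3 d_2 \\ c_1 d_3 & c_2 d_3 & c_3 d_3 \end{bmatrix}$$

Evaluation of the right hand side gives

$$\mathbf{d}\mathbf{c}^{\mathrm{T}} - \mathbf{c}\mathbf{d}^{\mathrm{T}} = \begin{bmatrix} 0 & c_2 d_1 - c_1 d_2 & c_3 d_1 - c_1 d_3 \\ c_1 d_2 - c_2 d_1 & 0 & c_3 d_2 - c_2 d_3 \\ c_1 d_3 - c_3 d_1 & c_2 d_3 - c_3 d_2 & 0 \end{bmatrix}$$

Comparison shows that the left hand side equals the right hand side.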

This result can be generalized to higher dimensions using geometric algebra. In particular in any dimension bivectors can be identified with skew-symmetric matrices, so the product between a skew-symmetric matrix and vector is equivalent to the grade-1 part of the product of a bivector and vector. In three dimensions bivectors are dual to vectors so the product is equivalent to the cross product, with the bivector instead of its vector dual. In higher dimensions the product can still be calculated but bivectors have more degrees of freedom and are not equivalent to vectors.

This notation is also often much easier to work with, for example, in epipolar geometry.

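It follows immediately from the general properties of the cross product that

- $[\mathbf{a}]_{\times}\,\mathbf{a} = \mathbf{0}$ and $\mathbf{a}^{\mathrm{T}}\,[\mathbf{a}]_{\times} = \mathbf{0}$

and from the fact that [a]× is skew-symmetric it follows that

$$\mathbf{b}^{\mathrm{T}}\,[\mathbf{a}]_{\times}\,\mathbf{b} = 0.$$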

The above-mentioned triple product expansion (bac–cab rule) can be easily proven using this notation.

As mentioned above, the Lie algebra R3 with cross product is isomorphic to the Lie algebra so(3), whose elements can be identified with the 3×3 skew-symmetric matrices. The map a → [a]× provides an isomorphism between R3 and so(3). Under this map, the cross product of 3-vectors corresponds to the commutator of 3×3 skew-symmetric matrices.
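The matrix form and the commutator correspondence can both be checked with a few lines of plain Python. In this sketch matrices are nested tuples and the helpers `skew`, `matvec`, `matmul` and `matsub` are hypothetical names introduced for the example.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def skew(a):
    """Skew-symmetric matrix [a]x such that [a]x b = a x b."""
    a1, a2, a3 = a
    return ((0, -a3, a2),
            (a3, 0, -a1),
            (-a2, a1, 0))

def matvec(m, v):
    """Product of a 3x3 matrix with a 3-vector."""
    return tuple(sum(m[r][c] * v[c] for c in range(3)) for r in range(3))

def matmul(m, n):
    """Product of two 3x3 matrices."""
    return tuple(tuple(sum(m[r][k] * n[k][c] for k in range(3))
                       for c in range(3)) for r in range(3))

def matsub(m, n):
    """Entry-wise difference of two 3x3 matrices."""
    return tuple(tuple(m[r][c] - n[r][c] for c in range(3)) for r in range(3))

a, b = (1, 2, 3), (-4, 0, 5)

# [a]x b reproduces the cross product.
print(matvec(skew(a), b), cross(a, b))  # (10, -17, 8) (10, -17, 8)

# The commutator [a]x [b]x - [b]x [a]x equals [a x b]x.
commutator = matsub(matmul(skew(a), skew(b)), matmul(skew(b), skew(a)))
print(commutator == skew(cross(a, b)))  # True
```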

Matrix conversion for cross product with canonical base vectors

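Denoting with $\mathbf{e}_i \in \mathbf{R}^3$ the i-th canonical base vector, the cross product of a generic vector $\mathbf{v} \in \mathbf{R}^3$ with $\mathbf{e}_i$ is given by $\mathbf{v} \times \mathbf{e}_i = \mathbf{C}_i \mathbf{v}$, where

$$\mathbf{C}_1 = \begin{bmatrix} 0 & 0 & 0 \\ 0 & 0 & 1 \\ 0 & -1 & 0 \end{bmatrix}, \quad \mathbf{C}_2 = \begin{bmatrix} 0 & 0 & -1 \\ 0 & 0 & 0 \\ 1 & 0 & 0 \end{bmatrix}, \quad \mathbf{C}_3 = \begin{bmatrix} 0 & 1 & 0 \\ -1 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}$$

These matrices share the following properties:

- $\mathbf{C}_i^{\mathrm{T}} = -\mathbf{C}_i$ (skew-symmetric);

- Both trace and determinant are zero;

- $\operatorname{rank}(\mathbf{C}_i) = 2$;

- $\mathbf{C}_i \mathbf{C}_i^{\mathrm{T}} = \mathbf{P}^{\perp}_{\mathbf{e}_i}$ (see below);

The orthogonal projection matrix of a vector $\mathbf{v} \neq \mathbf{0}$ is given by $\mathbf{P}_{\mathbf{v}} = \mathbf{v} \left(\mathbf{v}^{\mathrm{T}} \mathbf{v}\right)^{-1} \mathbf{v}^{\mathrm{T}}$. The projection matrix onto the orthogonal complement is given by $\mathbf{P}^{\perp}_{\mathbf{v}} = \mathbf{I} - \mathbf{P}_{\mathbf{v}}$, where I is the identity matrix. For the special case of $\mathbf{v} = \mathbf{e}_i$, it can be verified that

$$\mathbf{P}^{\perp}_{\mathbf{e}_1} = \begin{bmatrix} 0 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}, \quad \mathbf{P}^{\perp}_{\mathbf{e}_2} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 1 \end{bmatrix}, \quad \mathbf{P}^{\perp}_{\mathbf{e}_3} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 0 \end{bmatrix}$$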

For other properties of orthogonal projection matrices, see projection (linear algebra).

Index notation for tensors

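The cross product can alternatively be defined in terms of the Levi-Civita tensor $E_{ijk}$ and a dot product $\eta^{mi}$, which are useful in converting vector notation for tensor applications:

$$\mathbf{c} = \mathbf{a} \times \mathbf{b} \iff c^m = \sum_{i=1}^{3} \sum_{j=1}^{3} \sum_{k=1}^{3} \eta^{mi} E_{ijk} a^j b^k$$

where the indices correspond to vector components. This characterization of the cross product is often expressed more compactly using the Einstein summation convention as

$$\mathbf{c} = \mathbf{a} \times \mathbf{b} \iff c^m = \eta^{mi} E_{ijk} a^j b^k$$

in which repeated indices are summed over the values 1 to 3.

In a positively-oriented orthonormal basis $\eta^{mi} = \delta^{mi}$ (the Kronecker delta) and $E_{ijk} = \varepsilon_{ijk}$ (the Levi-Civita symbol). In that case, this representation is another form of the skew-symmetric representation of the cross product:

$$[\varepsilon_{ijk} a^j] = [\mathbf{a}]_{\times}.$$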

In classical mechanics: representing the cross product by using the Levi-Civita symbol can cause mechanical symmetries to be obvious when physical systems are isotropic. (An example: consider a particle in a Hooke's Law potential in three-space, free to oscillate in three dimensions; none of these dimensions are "special" in any sense, so symmetries lie in the cross-product-represented angular momentum, which are made clear by the abovementioned Levi-Civita representation).
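For a positively oriented orthonormal basis, the index formula can be evaluated literally by summing over the Levi-Civita symbol. The sketch below uses zero-based indices and a closed-form expression for the symbol; both are conveniences chosen here, and the function names are hypothetical.

```python
def levi_civita(i, j, k):
    """Levi-Civita symbol for indices in {0, 1, 2}.

    Returns +1 for even permutations of (0, 1, 2), -1 for odd ones,
    and 0 when any index repeats.
    """
    return (i - j) * (j - k) * (k - i) // 2

def cross_index(a, b):
    """Cross product via c_i = sum over j, k of eps_ijk * a_j * b_k."""
    return tuple(
        sum(levi_civita(i, j, k) * a[j] * b[k]
            for j in range(3) for k in range(3))
        for i in range(3)
    )

print(levi_civita(0, 1, 2), levi_civita(1, 0, 2), levi_civita(0, 0, 2))  # 1 -1 0
print(cross_index((2, 3, 4), (5, 6, 7)))                                # (-3, 6, -3)
```

Only six of the 27 terms in the triple sum are non-zero, which is why the explicit component formula has two products per component.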

Mnemonic

The word "xyzzy" can be used to remember the definition of the cross product.

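If

$$\mathbf{a} = \mathbf{b} \times \mathbf{c}$$

where:

$$\mathbf{a} = \begin{bmatrix} a_x \\ a_y \\ a_z \end{bmatrix}, \quad \mathbf{b} = \begin{bmatrix} b_x \\ b_y \\ b_z \end{bmatrix}, \quad \mathbf{c} = \begin{bmatrix} c_x \\ c_y \\ c_z \end{bmatrix}$$

then:

$$\begin{aligned}
a_x &= b_y c_z - b_z c_y \\
a_y &= b_z c_x - b_x c_z \\
a_z &= b_x c_y - b_y c_x.
\end{aligned}$$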

The second and third equations can be obtained from the first by simply vertically rotating the subscripts, x → y → z → x. The problem, of course, is how to remember the first equation, and two options are available for this purpose: either to remember the relevant two diagonals of Sarrus's scheme (those containing i), or to remember the xyzzy sequence.

Since the first diagonal in Sarrus's scheme is just the main diagonal of the above-mentioned 3×3 matrix, the first three letters of the word xyzzy can be very easily remembered.

Cross visualization

Similarly to the mnemonic device above, a "cross" or X can be visualized between the two vectors in the equation. This may be helpful for remembering the correct cross product formula.

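If

$$\mathbf{a} = \mathbf{b} \times \mathbf{c}$$

then:

$$\mathbf{a} = \begin{bmatrix} b_x \\ b_y \\ b_z \end{bmatrix} \times \begin{bmatrix} c_x \\ c_y \\ c_z \end{bmatrix}.$$

If we want to obtain the formula for $a_x$ we simply drop the $b_x$ and $c_x$ from the formula, and take the next two components down:

$$a_x = \begin{bmatrix} b_y \\ b_z \end{bmatrix} \times \begin{bmatrix} c_y \\ c_z \end{bmatrix}.$$

When doing this for $a_y$, the next two elements down should "wrap around" the matrix so that after the z component comes the x component. For clarity, when performing this operation for $a_y$, the next two components should be z and x (in that order), while for $a_z$ the next two components should be taken as x and y:

$$a_y = \begin{bmatrix} b_z \\ b_x \end{bmatrix} \times \begin{bmatrix} c_z \\ c_x \end{bmatrix}, \quad a_z = \begin{bmatrix} b_x \\ b_y \end{bmatrix} \times \begin{bmatrix} c_x \\ c_y \end{bmatrix}.$$

For $a_x$ then, if we visualize the cross operator as pointing from an element on the left to an element on the right, we can take the first element on the left and simply multiply by the element that the cross points to in the right-hand matrix. We then subtract the next element down on the left, multiplied by the element that the cross points to here as well. This results in our $a_x$ formula:

$$a_x = b_y c_z - b_z c_y.$$

We can do this in the same way for $a_y$ and $a_z$ to construct their associated formulas.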

Applications

The cross product has applications in various contexts. For example, it is used in computational geometry, physics and engineering. A non-exhaustive list of examples follows.

Computational geometry

The cross product appears in the calculation of the distance of two skew lines (lines not in the same plane) from each other in three-dimensional space.

The cross product can be used to calculate the normal for a triangle or polygon, an operation frequently performed in computer graphics. For example, the winding of a polygon (clockwise or anticlockwise) about a point within the polygon can be calculated by triangulating the polygon (like spoking a wheel) and summing the angles (between the spokes) using the cross product to keep track of the sign of each angle.

In computational geometry of the plane, the cross product is used to determine the sign of the acute angle defined by three points $p_1 = (x_1, y_1)$, $p_2 = (x_2, y_2)$ and $p_3 = (x_3, y_3)$. It corresponds to the direction (upward or downward) of the cross product of the two coplanar vectors defined by the two pairs of points $(p_1, p_2)$ and $(p_1, p_3)$. The sign of the acute angle is the sign of the expression

$$P = (x_2 - x_1)(y_3 - y_1) - (y_2 - y_1)(x_3 - x_1),$$

which is the signed length of the cross product of the two vectors.

In the "right-handed" coordinate system, if the result is 0, the points are collinear; if it is positive, the three points constitute a positive angle of rotation around $p_1$ from $p_2$ to $p_3$, otherwise a negative angle. From another point of view, the sign of P tells whether $p_3$ lies to the left or to the right of the line through $p_1$ and $p_2$.
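The planar orientation test is short enough to write out in full. The sketch below evaluates the signed expression for three points given as (x, y) pairs; the function name `orientation` is an illustrative choice.

```python
def orientation(p1, p2, p3):
    """Signed z-component of (p2 - p1) x (p3 - p1) for points in the plane.

    Positive: p3 lies to the left of the directed line p1 -> p2
    (counterclockwise turn); negative: to the right; zero: collinear.
    """
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    return (x2 - x1) * (y3 - y1) - (y2 - y1) * (x3 - x1)

print(orientation((0, 0), (1, 0), (0, 1)))   # 1  (left of the line, counterclockwise)
print(orientation((0, 0), (1, 0), (0, -1)))  # -1 (right of the line, clockwise)
print(orientation((0, 0), (1, 1), (2, 2)))   # 0  (collinear)
```

With integer coordinates the test is exact; with floating-point input, values very close to zero may need a tolerance, which this sketch does not attempt to handle.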

The cross product is used in calculating the volume of a polyhedron such as a tetrahedron or parallelepiped.

Angular momentum and torque

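The angular momentum L of a particle about a given origin is defined as:

$$\mathbf{L} = \mathbf{r} \times \mathbf{p},$$

where r is the position vector of the particle relative to the origin and p is the linear momentum of the particle.

In the same way, the moment M of a force FB applied at point B around point A is given as:

$$\mathbf{M}_{\mathrm{A}} = \mathbf{r}_{\mathrm{AB}} \times \mathbf{F}_{\mathrm{B}},$$

where $\mathbf{r}_{\mathrm{AB}}$ is the position vector from A to B.

In mechanics the moment of a force is also called torque and written as τ.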

Since position r, linear momentum p and force F are all true vectors, both the angular momentum L and the moment of a force M are pseudovectors or axial vectors.
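As a numerical illustration of both definitions, the sketch below computes an angular momentum and a torque for made-up values; the quantities are unitless example numbers and the function names are hypothetical.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def angular_momentum(r, p):
    """L = r x p for position r (relative to the origin) and momentum p."""
    return cross(r, p)

def torque(r_ab, force_b):
    """Moment about A of a force applied at B, with r_ab pointing from A to B."""
    return cross(r_ab, force_b)

r = (2, 0, 0)  # particle on the x-axis
p = (0, 3, 0)  # moving in the +y direction
print(angular_momentum(r, p))  # (0, 0, 6): along +z, by the right-hand rule

F = (0, 0, 5)  # force along +z applied at the same point
print(torque(r, F))            # (0, -10, 0)
```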

Rigid body

The cross product frequently appears in the description of rigid motions. Two points P and Q on a rigid body can be related by:

$$\mathbf{v}_P - \mathbf{v}_Q = \boldsymbol{\omega} \times (\mathbf{r}_P - \mathbf{r}_Q)$$

where r is the point's position, v is its velocity and ω is the body's angular velocity.

Since position and velocity are true vectors, the angular velocity is a pseudovector or axial vector.

Lorentz force

The cross product is used to describe the Lorentz force experienced by a moving electric charge qe:

$$\mathbf{F} = q_e \left(\mathbf{E} + \mathbf{v} \times \mathbf{B}\right)$$

Since velocity v, force F and electric field E are all true vectors, the magnetic field B is a pseudovector.
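The Lorentz force law combines an electric term with a cross product term, as the following sketch shows. The function name `lorentz_force` and the sample values are assumptions made for illustration, with all quantities taken as unitless numbers.

```python
def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def lorentz_force(q, E, v, B):
    """F = q (E + v x B) for charge q, electric field E, velocity v, magnetic field B."""
    v_cross_b = cross(v, B)
    return tuple(q * (e + w) for e, w in zip(E, v_cross_b))

q = 2
E = (0, 0, 1)
v = (1, 0, 0)
B = (0, 1, 0)

print(cross(v, B))                # (0, 0, 1)
print(lorentz_force(q, E, v, B))  # (0, 0, 4)
```

Setting E to zero leaves only the magnetic term, which is always perpendicular to the velocity.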

Other

In vector calculus, the cross product is used to define the formula for the vector operator curl.

The trick of rewriting a cross product in terms of a matrix multiplication appears frequently in epipolar and multi-view geometry, in particular when deriving matching constraints.

As an external product

The cross product can be defined in terms of the exterior product. It can be generalized to an external product in other than three dimensions. This view allows for a natural geometric interpretation of the cross product. In exterior algebra the exterior product of two vectors is a bivector. A bivector is an oriented plane element, in much the same way that a vector is an oriented line element. Given two vectors a and b, one can view the bivector a ∧ b as the oriented parallelogram spanned by a and b. The cross product is then obtained by taking the Hodge star of the bivector a ∧ b, mapping 2-vectors to vectors:

$$\mathbf{a} \times \mathbf{b} = \star(\mathbf{a} \wedge \mathbf{b}).$$

This can be thought of as the oriented multi-dimensional element "perpendicular" to the bivector. Only in three dimensions is the result an oriented one-dimensional element – a vector – whereas, for example, in four dimensions the Hodge dual of a bivector is two-dimensional – a bivector. So, only in three dimensions can a vector cross product of a and b be defined as the vector dual to the bivector a ∧ b: it is perpendicular to the bivector, with orientation dependent on the coordinate system's handedness, and has the same magnitude relative to the unit normal vector as a ∧ b has relative to the unit bivector; precisely the properties described above.

Handedness

Consistency

When physics laws are written as equations, it is possible to make an arbitrary choice of the coordinate system, including handedness. One should be careful to never write down an equation where the two sides do not behave equally under all transformations that need to be considered. For example, if one side of the equation is a cross product of two polar vectors, one must take into account that the result is an axial vector. Therefore, for consistency, the other side must also be an axial vector. More generally, the result of a cross product may be either a polar vector or an axial vector, depending on the type of its operands (polar vectors or axial vectors). Namely, polar vectors and axial vectors are interrelated in the following ways under application of the cross product:

- polar vector × polar vector = axial vector

- axial vector × axial vector = axial vector

- polar vector × axial vector = polar vector

- axial vector × polar vector = polar vector

or symbolically

- polar × polar = axial

- axial × axial = axial

- polar × axial = polar

- axial × polar = polar

Because the cross product may also be a polar vector, it may not change direction with a mirror image transformation. This happens, according to the above relationships, if one of the operands is a polar vector and the other one is an axial vector (for example, an axial vector that is itself the cross product of two polar vectors). For instance, a vector triple product involving three polar vectors is a polar vector.

A handedness-free approach is possible using exterior algebra.

The paradox of the orthonormal basis

Let (i, j, k) be an orthonormal basis. The vectors i, j and k don't depend on the orientation of the space. They can even be defined in the absence of any orientation. They therefore cannot be axial vectors. But if i and j are polar vectors, then k is an axial vector, since i × j = k or j × i = k. This is a paradox.

"Axial" and "polar" are physical qualifiers for physical vectors; that is, vectors which represent physical quantities such as the velocity or the magnetic field. The vectors i, j and k are mathematical vectors, neither axial nor polar. In mathematics, the cross-product of two vectors is a vector. There is no contradiction.

Resources

- The Cross Product, OpenStax

- Cross Product, Cornell University

Licensing

Content obtained and/or adapted from:

- Cross product, Wikipedia under a CC BY-SA license

![{\displaystyle {\begin{aligned}\mathbf {a} \times \mathbf {b} =[\mathbf {a} ]_{\times }\mathbf {b} &={\begin{bmatrix}\,0&\!-a_{3}&\,\,a_{2}\\\,\,a_{3}&0&\!-a_{1}\\-a_{2}&\,\,a_{1}&\,0\end{bmatrix}}{\begin{bmatrix}b_{1}\\b_{2}\\b_{3}\end{bmatrix}}\\\mathbf {a} \times \mathbf {b} ={[\mathbf {b} ]_{\times }}^{\mathrm {\!\!T} }\mathbf {a} &={\begin{bmatrix}\,0&\,\,b_{3}&\!-b_{2}\\-b_{3}&0&\,\,b_{1}\\\,\,b_{2}&\!-b_{1}&\,0\end{bmatrix}}{\begin{bmatrix}a_{1}\\a_{2}\\a_{3}\end{bmatrix}},\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/77eaf3e139944a22bc3543de85a65d2d280547c6)

![{\displaystyle [\mathbf {a} ]_{\times }{\stackrel {\rm {def}}{=}}{\begin{bmatrix}\,\,0&\!-a_{3}&\,\,\,a_{2}\\\,\,\,a_{3}&0&\!-a_{1}\\\!-a_{2}&\,\,a_{1}&\,\,0\end{bmatrix}}.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/614cc7fd18f2f2e212803822f31acb2505c98c89)

![{\displaystyle [\mathbf {a} ]_{\times ,i}=\mathbf {a} \times \mathbf {{\hat {e}}_{i}} ,\;i\in \{1,2,3\}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/74baaa1f6814e02fb133911b2bbab966485a3806)

![{\displaystyle [\mathbf {a} ]_{\times }=\sum _{i=1}^{3}\left(\mathbf {a} \times \mathbf {{\hat {e}}_{i}} \right)\otimes \mathbf {{\hat {e}}_{i}} ,}](https://wikimedia.org/api/rest_v1/media/math/render/svg/fb0381c1581881a166e2f4e9cefe0b236265eefd)

![{\displaystyle [\mathbf {a} ]_{\times }=\mathbf {d} \mathbf {c} ^{\mathrm {T} }-\mathbf {c} \mathbf {d} ^{\mathrm {T} }.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d7bebe8181aeb49a3e8987339594fd7de7c454a9)

![{\displaystyle [\mathbf {a} ]_{\times }={\begin{bmatrix}0&c_{2}d_{1}-c_{1}d_{2}&c_{3}d_{1}-c_{1}d_{3}\\c_{1}d_{2}-c_{2}d_{1}&0&c_{3}d_{2}-c_{2}d_{3}\\c_{1}d_{3}-c_{3}d_{1}&c_{2}d_{3}-c_{3}d_{2}&0\end{bmatrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3ff95aa2908dc95252f1a28c8a9167458c98c993)

![{\displaystyle [\mathbf {a} ]_{\times }\,\mathbf {a} =\mathbf {0} }](https://wikimedia.org/api/rest_v1/media/math/render/svg/6e918b623a3b34134199284e350a5a06f8fe0305)

![{\displaystyle \mathbf {a} ^{\mathrm {T} }\,[\mathbf {a} ]_{\times }=\mathbf {0} }](https://wikimedia.org/api/rest_v1/media/math/render/svg/3cd1a98ffd5ab228553c458345bb26af8422bb43)

![{\displaystyle \mathbf {b} ^{\mathrm {T} }\,[\mathbf {a} ]_{\times }\,\mathbf {b} =0.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/c23cfb35b83ca69742e7da1381a7477a18d04e4d)

![{\displaystyle [\varepsilon _{ijk}a^{j}]=[\mathbf {a} ]_{\times }.}](https://wikimedia.org/api/rest_v1/media/math/render/svg/063b837f18afcf9a012a49f73f4b4c2e350e99e9)